A few months ago I was commissioned to create some background subtraction technology in flash. The project fell through at the time. The final product wasn't as stable as I'd hoped it might be. I thought that since the project fell through I would share some of my findings to see if the collective flash community might have any ideas or suggestions as to how one might implement this kind of technology.

The idea of background subtraction is to separate two parts of an image: the foreground and a "static" background. Here is an example. Given the two images on the left, how does one extract the image on the right? You might think this is fairly easy: just look at the difference in color between the two. Unfortunately it isn't as simple as that.

Firstly, illumination and varying camera brightness cause a problem: not only does the foreground constantly change color, but so does the background. Most web cams automatically adjust to a white point. You'll notice this if you hold a white sheet of paper against the web-cam: the background will dim. This means that when measuring the difference in RGB color for each pixel, it is likely that the whole screen will be completely different from one frame to the next, which is not ideal if you want to separate out smaller regions!

The method I chose to incorporate was to use multiple color spaces, combined in order to create a new color space which was optimum for this kind of a background subtraction scenario. Here is the program I used to determine the optimum subtraction for each color space. Just click on the image to launch it:

Initially a background is captured: the user steps out of the frame and a still bitmap image is taken. Then for each color space a comparison is made. If the difference between given pixels is greater than a threshold for any color component (or a combination of the three) then the pixel is deemed to be in the foreground. The three color spaces used in this case were YUV, normalized RGB and normalized YUV. The latter of these I haven't seen in use before, but it gives reasonable results.
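To make the per-pixel test concrete, here is a minimal sketch of comparing a background pixel with a frame pixel in two of the colour spaces. It is not the code from the demo: the BT.601 YUV weights, the threshold values and the choice to OR the two tests together are all assumptions, and the function names are made up for illustration.

```actionscript
// Convert a 0xRRGGBB colour to [Y, U, V] using BT.601 weights (an assumption;
// the original program may have used different weights).
function toYUV(c:uint):Array {
    var r:Number = (c >> 16) & 0xFF;
    var g:Number = (c >> 8) & 0xFF;
    var b:Number = c & 0xFF;
    var y:Number = 0.299 * r + 0.587 * g + 0.114 * b;
    return [y, 0.492 * (b - y), 0.877 * (r - y)];
}

// Normalised RGB: each channel divided by the channel sum, which cancels
// most uniform brightness changes.
function toNormRGB(c:uint):Array {
    var r:Number = (c >> 16) & 0xFF;
    var g:Number = (c >> 8) & 0xFF;
    var b:Number = c & 0xFF;
    var sum:Number = r + g + b;
    if (sum == 0) return [1 / 3, 1 / 3, 1 / 3]; // black: avoid dividing by zero
    return [r / sum, g / sum, b / sum];
}

// True if any of the three components differs by more than its threshold.
function differs(a:Array, b:Array, thresholds:Array):Boolean {
    for (var i:int = 0; i < 3; i++) {
        if (Math.abs(a[i] - b[i]) > thresholds[i]) return true;
    }
    return false;
}

// Hypothetical thresholds, to be tuned per camera. A luma threshold of 255
// can never be exceeded, so only the chroma components of YUV are compared.
var YUV_T:Array = [255, 12, 12];
var NRGB_T:Array = [0.06, 0.06, 0.06];

// A pixel counts as foreground if either colour space reports a change.
function isForeground(bg:uint, frame:uint):Boolean {
    return differs(toYUV(bg), toYUV(frame), YUV_T)
        || differs(toNormRGB(bg), toNormRGB(frame), NRGB_T);
}
```

With these thresholds a grey wall that simply gets brighter stays in the background, while a strongly coloured object in front of it is flagged. Requiring both colour spaces to agree (AND instead of OR) would trade missed foreground pixels for fewer false alarms.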

Once the image has been thresholded, an alpha mask is applied to the current web-cam frame, and this image is used as the foreground. This allows some very impressive things to be done. The background can be replaced by an image, or another video (Apple make use of this in their software Photo Booth), but more excitingly we now have two image planes, which means 3D!

Here is an example of background replacement:

In the image below you'll see 3D in action (excuse the poor lighting conditions). Click to launch it, but remember, it probably won't work fantastically:

The 3D angle can be controlled by head position (motion tracking) allowing you to look around the person in front of the camera which is quite an interesting effect.

The main issues with the project were that lighting conditions are constantly changing, and can vary hugely from web-cam to web-cam. There are other more computationally intensive approaches which flash may just about be able to handle, but unfortunately my brain cannot. Enjoy the experiment, and let me know how it works with your web-cams!

Showing posts with label webcam. Show all posts

Wednesday, 19 January 2011

Multi-Color-Space-Thresholding

Labels: 3D, AS3, augmented reality, webcam

Saturday, 11 September 2010

Real Time Regional Differencing

A little while ago I produced a method for extracting interesting regions from images which I called regional differencing (see post). I have optimised the code, and it now runs in real time. It works very well as a simple edge detector with the ability to produce much thinner lines than the Sobel detector; notice in the image below that the maximum line thickness is one pixel. The algorithm works by comparing more and less pixelated versions of an image to find local differences compared to a local mean.

To improve the algorithm I switched from the RGB colour space to NUV as this improved results (albeit reducing performance marginally). The detector doesn't quite have the quality of something like the Canny detector, but it requires a lot less processing which in flash is very important!

Anyway, check out the demo by clicking on the link below. The slider changes the pixel size of the comparison bitmap, with some interesting results. The default settings use a size of 2 pixels, producing lines with a maximum thickness of one pixel.
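For anyone who wants to experiment, here is a rough sketch of the idea as described above: pixelate the image into square cells, then flag pixels that differ from their cell's mean by more than a threshold. It works on a plain list of brightness values rather than a bitmap, and the function name, parameters and threshold are assumptions for illustration, not the optimised demo code.

```actionscript
// grey: row-major brightness values (0 to 255) for a w x h image.
// block: side length of the "pixelated" cells, in pixels.
// Returns one Boolean per pixel: true where the pixel differs from the mean
// of its cell by more than `threshold`.
function regionalDifference(grey:Vector.<Number>, w:int, h:int,
                            block:int, threshold:Number):Vector.<Boolean> {
    var out:Vector.<Boolean> = new Vector.<Boolean>(w * h);
    var x:int, y:int;
    for (var by:int = 0; by < h; by += block) {
        for (var bx:int = 0; bx < w; bx += block) {
            // Clip the cell at the right and bottom image edges.
            var x1:int = Math.min(bx + block, w);
            var y1:int = Math.min(by + block, h);
            // Mean brightness of the cell (the "more pixelated" image).
            var sum:Number = 0;
            for (y = by; y < y1; y++)
                for (x = bx; x < x1; x++) sum += grey[y * w + x];
            var mean:Number = sum / ((x1 - bx) * (y1 - by));
            // Compare every original pixel in the cell with that mean.
            for (y = by; y < y1; y++)
                for (x = bx; x < x1; x++)
                    out[y * w + x] = Math.abs(grey[y * w + x] - mean) > threshold;
        }
    }
    return out;
}
```

A block size of 2 corresponds to the default slider setting mentioned above; larger blocks compare each pixel with a wider local mean.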

Labels: AS3, User Interface, webcam

Friday, 10 September 2010

Visual Human Interfaces

Making human interfaces intuitive, simple and accurate is a huge challenge. Some of the biggest challenges lie in the field of motion and gesture detection. Analysing visual data in real time can be very processor intensive.

This is a fairly simple implementation of an interface which has the ability to track on screen button presses and swipes (it is part of an ongoing research project):

Click to launch a demo video:

Labels: AS3, User Interface, webcam, youtube

Monday, 6 September 2010

Crossfade Using Pixelbender

I am currently doing some work for a client which involves background subtraction. I have been using Pixel Bender to calculate filters in flash for a while now, but doing some research I found there is actually huge scope for using Pixel Bender to carry out other calculations (see this article).

The following example uses Pixel Bender as a part of the background subtraction process. Each new frame from the webcam is blended with the accumulated result of all previous frames, so that over time the result becomes a good estimate of the background pixels. The example uses the blendShader property of a display object with blend mode set to BlendMode.SHADER, and a custom Pixel Bender shader that mixes pixels. Check it out (click to launch):
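The blending step itself is easy to sketch in plain ActionScript, without the shader. The version below is an exponential running average: each new frame nudges the stored estimate towards itself by a small fraction. The function name and the idea of keeping the estimate as Numbers are assumptions for illustration; the demo does the mixing inside a Pixel Bender shader instead.

```actionscript
// background: the running estimate, one Number per channel value. Numbers are
// used so that small updates are not lost to 8-bit rounding.
// frame: channel values of the newest webcam frame, same length and order.
// alpha: weight of the new frame, between 0 and 1.
function updateBackground(background:Vector.<Number>,
                          frame:Vector.<Number>, alpha:Number):void {
    for (var i:int = 0; i < background.length; i++) {
        // Move the estimate a fraction of the way towards the new frame.
        background[i] += alpha * (frame[i] - background[i]);
    }
}
```

A small alpha (0.05, say) makes the estimate slow to change, so someone walking through the frame barely disturbs it, but it also takes longer to adapt when the lighting shifts.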

Labels: AS3, pixel bender, webcam

Tuesday, 3 August 2010

FLAR Toolkit - First Go

I thought I'd finally get round to having a go with the FLAR toolkit (an augmented reality toolkit for flash). I made a marker which will be used in any AR experiments I do on the site, then quickly made a test program. All I can say is it works quite nicely and I'll be playing around with it in the near future. Click the second image to run the test!

Labels: 3D, AS3, augmented reality, webcam

Sunday, 1 August 2010

Webcam Gestures in Flash

I'm currently creating a game which involves gesturing as the primary control method. For those who aren't familiar with the term, gesturing was coined to describe a set of motions carried out on a touch screen. I thought it would be pretty cool to make the keyboard and mouse completely obsolete, so I applied my gesturing algorithm to the webcam. Getting timings right with the webcam was the hardest part. You can choose when to start and stop using a mouse, but how do you tell when one gesture ends and the next begins on the webcam?

Click below to watch a video of the experiment in action:

It works by calculating the angles travelled in a gesture. It compares these to a list of angles which relates to a certain gesture. For example for a straight line in the positive horizontal direction this would be 0-0-0-0-0 or in the negative horizontal 180-180-180-180. The program determines which preset gesture the carried out gesture is most like and then performs an action based on this choice. The result is by no means stable, but using a better set of thresholds and presets can improve the performance dramatically.
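Here is a small sketch of that matching step. It turns a list of tracked points into step angles and picks the preset with the lowest mean angular error. The function names, the way presets of different lengths are stretched to fit, and the error cut-off are all assumptions for illustration rather than the code behind the experiment.

```actionscript
// Smallest difference between two angles, in degrees (result is 0 to 180).
function angleDiff(a:Number, b:Number):Number {
    var d:Number = Math.abs(a - b) % 360;
    return d > 180 ? 360 - d : d;
}

// Angle in degrees (0 to 360) of each step between consecutive tracked
// points. Screen y grows downwards, so 90 means "down" here.
function stepAngles(points:Array):Array {
    var angles:Array = [];
    for (var i:int = 1; i < points.length; i++) {
        var rad:Number = Math.atan2(points[i].y - points[i - 1].y,
                                    points[i].x - points[i - 1].x);
        angles.push((rad * 180 / Math.PI + 360) % 360);
    }
    return angles;
}

// Mean angular error between a performed gesture and one preset. The preset
// is stretched to the gesture's length so the two lists need not match.
function gestureError(angles:Array, preset:Array):Number {
    var total:Number = 0;
    for (var i:int = 0; i < angles.length; i++) {
        var j:int = int(i * preset.length / angles.length);
        total += angleDiff(angles[i], preset[j]);
    }
    return total / angles.length;
}

// Name of the closest preset, or null if even the best one has a mean error
// above maxError (or if no angles were recorded).
function closestGesture(angles:Array, presets:Object, maxError:Number):String {
    var best:String = null;
    var bestError:Number = maxError;
    for (var key:String in presets) {
        var err:Number = gestureError(angles, presets[key]);
        if (err <= bestError) { bestError = err; best = key; }
    }
    return best;
}
```

Presets would be passed as something like {right: [0, 0, 0, 0, 0], left: [180, 180, 180, 180]}. Measuring the error on the circle (so that 350 and 10 are only 20 degrees apart) matters for gestures that hover around the positive horizontal direction.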

Click here to launch the experiment and have a go yourself. Remember you'll need a webcam to do so.

Labels: AS3, User Interface, webcam

Saturday, 17 July 2010

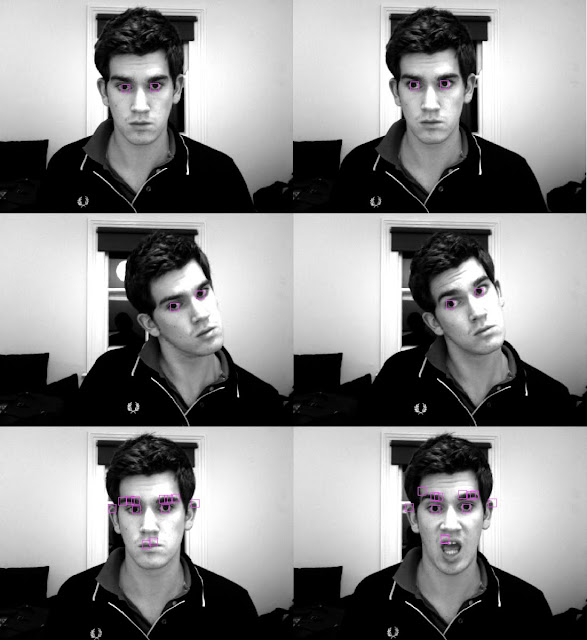

Real Time Feature Point Tracking in AS3

It's a bit late, so instead of writing a long informative post about how feature point tracking works I thought I'd just upload some of my results from this evening. I think it's quite exciting stuff. The image shows feature point tracking working relatively well on my pupils, and also on other facial features (the last two images). I am very pleased with the result.

A related project I have also been working on this evening is optical flow, which I am close to getting working. Here is a preview of what is to come:

Both methods are used in tracking objects in moving images and have been implemented in real time. I will post sample movies and some explanations shortly so you can play around with them yourself.

Labels: AS3, augmented reality, face recognition, shape recognition, webcam

Friday, 2 July 2010

Computer Vision and the Hough Transform

I was working on computer vision yesterday and went to my girlfriend's graduation ceremony today so haven't had a chance to upload. I decided to take another look at computer vision - and in this case a slightly more intelligent one than previously. The method I took a look at is known as the Hough transform. I think that the simplest and most effective way to explain how the Hough Transform works is using a bullet pointed list, but before I begin you should know that it is used to extract information about shape features from an image. In this case a line / all the lines present in an image.

- We start with an image in which we want to detect lines:

- First of all apply a Canny edge detector to the image, isolating edge pixels:

- At this stage, to reduce computation required in the next stage I decided to sample edge pixels instead of using all of them. The results for s=0.2 and s=0.9 are shown below:

- Then, for every possible pair of sampled edge pixels, calculate the angle of the line between them, as well as that line's distance to the origin. Plot a cumulative bitmap graph of angle against distance. The way I did this was to increase the pixel value in that location each time a distance/angle occurred. Recall that a line can be defined by an angle and distance to an origin. The resulting bitmap is known as the Hough Transform:

- By increasing contrast and playing around with thresholds in the Hough transform it is clear that 8 distinct regions are visible, we know that the detector has done its job as there are 8 lines in the original image:

- Remember that each point provides us with data about the line angle and distance from the origin, so let's use the flash API to draw some lines:

- As you can see the result isn't too bad at all: we get 8 lines, although they are a bit diffuse. The next step is to look into grouping algorithms to pick only a single pixel from the final Hough transform for each line. In this version of the program a line is drawn for each pixel, which results in an angle and position range for each line. Pretty close though.
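The accumulation step in the list above can be sketched as follows. This is an illustration under assumptions rather than the program used for the images: the function name and bin counts are made up, and it stores a signed distance (so that two parallel lines on opposite sides of the origin land in different bins), which the description above does not specify.

```actionscript
// points: sampled edge pixels, as objects with x and y properties.
// Returns an accumulator indexed as acc[angleBin * distBins + distBin].
// maxDist should be at least the image diagonal.
function houghFromPairs(points:Array, angleBins:int, distBins:int,
                        maxDist:Number):Vector.<int> {
    var acc:Vector.<int> = new Vector.<int>(angleBins * distBins);
    for (var n:int = 0; n < acc.length; n++) acc[n] = 0;
    for (var i:int = 0; i < points.length; i++) {
        for (var j:int = i + 1; j < points.length; j++) {
            var dx:Number = points[j].x - points[i].x;
            var dy:Number = points[j].y - points[i].y;
            if (dx == 0 && dy == 0) continue; // identical points define no line
            // Angle of the line, folded into 0..PI so direction is ignored.
            var angle:Number = Math.atan2(dy, dx);
            if (angle < 0) angle += Math.PI;
            if (angle >= Math.PI) angle -= Math.PI;
            // Signed perpendicular distance from the origin to the line.
            var dist:Number = points[i].x * Math.sin(angle)
                            - points[i].y * Math.cos(angle);
            // Map angle and distance to accumulator bins, clamped to range.
            var a:int = Math.min(angleBins - 1, int(angle * angleBins / Math.PI));
            var d:int = int((dist + maxDist) * distBins / (2 * maxDist));
            d = Math.max(0, Math.min(distBins - 1, d));
            acc[a * distBins + d]++;
        }
    }
    return acc;
}
```

Because every pair of points is visited, the cost grows with the square of the number of edge pixels, which is why sampling them first makes such a difference.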

So that's how a Hough transform works for line detection. Just for fun, here's a real time Hough transform on a webcam stream. Just click the image below to launch it.

Labels: AS3, mathematics, shape recognition, webcam

Thursday, 18 February 2010

Face Recognition Algorithms

Over the last few days I have been looking at various algorithms and methods used in face recognition processes. The first method I looked at uses a colour space known as TSL (closely related to HSL - hue, saturation, luminosity). This colour space was developed with the intention of being far closer to the way the human brain sees colours than RGB and other computationally preferred systems. Taking a webcam stream and converting all pixels into TSL colour space with [R,G,B] -> [T,S,L], I found that although in certain lighting conditions the system can differentiate between skin colour and other surfaces, the method is far from ideal. For example the cream walls in my house can often be identified as skin colour, which clearly shows an issue. It is also clear from the images that areas of my face that are in shade are not recognised as skin. These issues are best addressed using methods that do not depend on colour.

In my research I found two viable methods for use in face recognition, using eigenfaces, and fisherfaces.

Eigenfaces seemed to be a more commonly used method, so I decided to follow that route first (albeit roughly, as I'm sure you all know by now I like to do things my own way when I program). In order to recognise a face in an image, the computer has to be trained to know what a face looks like. The first step involved writing a class to import .pgm files from the CMU face database. Here is a sample of what the faces looked like when imported. All images are in the frontal pose, with similar lighting conditions, and varying facial expressions.

The next three steps involved creating an average face, and taking the resulting image and calculating its vertical and horizontal gradients. The average face simply sums all of the pixels at each location for each face, and takes the average value. To calculate the gradient, the difference between two adjacent pixels is taken in either the vertical or the horizontal direction. Computers find it easy to see vertical and horizontal lines (I have already written some basic shape detection software which uses these kinds of algorithms) so I thought it might be a good idea to use these as comparisons with found faces. I planned on using a kind of probability test, with a threshold on the likelihood that any part of the image is a face, by comparing it to the mean face, the horizontal gradient face, and the vertical gradient face.
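As a rough illustration of those steps, the sketch below averages a set of greyscale faces and takes a simple neighbour-difference gradient. It assumes each face has already been imported as a flat list of brightness values of the same size; the function names and data layout are hypothetical.

```actionscript
// faces: Array of Vector.<Number>, each holding `size` greyscale pixel values.
// Returns the per-pixel mean over all faces.
function meanFace(faces:Array, size:int):Vector.<Number> {
    var mean:Vector.<Number> = new Vector.<Number>(size);
    var i:int;
    for (i = 0; i < size; i++) mean[i] = 0;
    for each (var face:Vector.<Number> in faces) {
        for (i = 0; i < size; i++) mean[i] += face[i];
    }
    for (i = 0; i < size; i++) mean[i] /= faces.length;
    return mean;
}

// Difference between each pixel and its neighbour at offset (dx, dy).
// Use (1, 0) for the horizontal gradient and (0, 1) for the vertical one.
// Pixels without a neighbour in that direction are left at 0.
function gradient(img:Vector.<Number>, w:int, h:int,
                  dx:int, dy:int):Vector.<Number> {
    var out:Vector.<Number> = new Vector.<Number>(w * h);
    for (var n:int = 0; n < w * h; n++) out[n] = 0;
    for (var y:int = 0; y < h - dy; y++) {
        for (var x:int = 0; x < w - dx; x++) {
            out[y * w + x] = img[(y + dy) * w + (x + dx)] - img[y * w + x];
        }
    }
    return out;
}
```

Calling gradient(mean, w, h, 1, 0) and gradient(mean, w, h, 0, 1) on the mean face gives the two gradient faces used for comparison.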

The three faces found are shown below for this database. Clearly one could find the mean face of all people wearing glasses, or all men, or all women, and this would affect its final appearance. Therefore it could theoretically be simple to build in gender testing using webcams (assuming a complete lack of androgyny which clearly there is not....), but a probabilistic approach could still be taken.

The mean face looks incredibly symmetric and smooth. This is perfection folks, and it's kind of frightening! The idea behind using these for face recognition is relatively simple. Scan an image, taking the difference between an overlaid mean face and the region of the image being scanned. If the difference is below a threshold it means that the images are similar, so it is likely that there is a face where you are checking. To make sure it is a face, compare the horizontal and vertical gradients of the region with those of the mean face. If they are similar to within a certain threshold it is very likely you have found a face!
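The scanning step might look something like the sketch below, which slides a template over a greyscale image and keeps the position with the lowest mean absolute difference. It only searches at a single scale, and the function names, data layout and threshold are assumptions for illustration.

```actionscript
// Mean absolute difference between a template and the image region whose
// top-left corner is at (ox, oy). Both are row-major greyscale values.
function regionDifference(img:Vector.<Number>, imgW:int,
                          tpl:Vector.<Number>, tplW:int, tplH:int,
                          ox:int, oy:int):Number {
    var total:Number = 0;
    for (var y:int = 0; y < tplH; y++) {
        for (var x:int = 0; x < tplW; x++) {
            total += Math.abs(img[(oy + y) * imgW + (ox + x)] - tpl[y * tplW + x]);
        }
    }
    return total / (tplW * tplH);
}

// Scan every position where the template fits. Returns {x, y, score} for the
// best match below `threshold`, or null if no region is similar enough.
function findFace(img:Vector.<Number>, imgW:int, imgH:int,
                  tpl:Vector.<Number>, tplW:int, tplH:int,
                  threshold:Number):Object {
    var best:Object = null;
    for (var oy:int = 0; oy + tplH <= imgH; oy++) {
        for (var ox:int = 0; ox + tplW <= imgW; ox++) {
            var score:Number = regionDifference(img, imgW, tpl, tplW, tplH, ox, oy);
            if (score < threshold && (best == null || score < best.score)) {
                best = {x: ox, y: oy, score: score};
            }
        }
    }
    return best;
}
```

The same two functions can be run on gradient images instead of brightness values, which gives the second and third checks described above.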

I'll come back to you when I have some working flash files and source code!

Labels: AS3, face recognition, Flash, image encoding, shape recognition, webcam

Thursday, 22 October 2009

Augmented Reality - Some examples

Hey everyone - I've been playing around with some of my own augmented reality functions - they don't use the FLAR toolkit - but I plan on looking into this sometime soon!

Here are a few images of what I have so far!

I'll post an update with some example files for you to play around with!

Update: put some examples up on the server -

Click here for some simple motion tracking

Click here for green object tracking (you'll need something green and don't wear a green jumper!)

or click here for the 3d monkey head example.

Enjoy!

Labels: AS3, augmented reality, Flash, webcam

Tuesday, 29 September 2009

Saving Webcam Snaps using AS3

In my previous post I briefly discussed how you can add a webcam object to the stage and (if the user has a webcam) show the webcam stream within a flash movie.

That's all well and good but let's do something with it. Since I've already written a post on saving images from flash, let's write a little program to take a snapshot of the webcam and save the image to the user's desktop!

So let's have a look at how this would work:

We already have the following code to create a webcam on the stage -

var CAM:Camera = Camera.getCamera();

CAM.setQuality(0, 100);

CAM.setMode(550, 400, stage.frameRate );

var VIDEO:Video = new Video(550, 400);

VIDEO.attachCamera(CAM);

addChild(VIDEO);

So let's add an event listener to the stage for when a user clicks on the image. This will later invoke the function to save the image.

stage.addEventListener(MouseEvent.MOUSE_DOWN, save);

function save(e:MouseEvent):void

{

}

The tool I use to encode image data is called as3corelib. This library has a load of classes but the one we want to use is called JPGEncoder.as, and you can download it here.

So let's import that into our movie. First create a folder, call it "com" and drag your newly acquired file into it. This folder should be in the same directory as your .fla and .swf files. (If the package line at the top of your copy of JPGEncoder.as names a different package, either mirror that folder structure and adjust the import to match, or change the package line to com.) Above all of the other code on the first frame write the following:

import com.JPGEncoder;

This imports the class we'll need to use to create an image. Next, and just below that add this:

var jpg_encoder:JPGEncoder = new JPGEncoder(100);

This initiates the class and sets the jpg output quality to 100. Of course if you want to output low quality jpegs you could always change this to 50 or something else.

So we have the jpeg encoder. What this does is convert bitmap data into a byte array which your computer will be able to read as a file. So next we'll create a bitmap, which should have the same resolution as your webcam stream (in this case 550x400).

var bitmap:BitmapData = new BitmapData(550, 400);

Now, within the save function let's draw the VIDEO object into the bitmap data using the draw() function

bitmap.draw(VIDEO);

If you read my previous post on saving from flash you'll already know exactly how to save files, just in case you missed it here it is -

1) Create a file reference object:

var fileToSave:FileReference = new FileReference();

2) In your save function encode the jpeg data, we'll use the ByteArray class...

var bytes:ByteArray = jpg_encoder.encode(bitmap);

3) and finally just after that within the save function

fileToSave.save(bytes,"screenshot"+".jpg");

The parameters are data and name, the data being the encoded jpeg and in this case the name is "screenshot.jpg". Note you'll need the extension for your computer to recognise the data as an image.

So here is an example, take a little snap of yourself from your webcam. (The whole file is only 8kb!)

Enjoy guys!

Labels: AS3, Flash, save files, webcam

Webcam Tutorial - Getting a webcam set up in flash

I've got a few projects lined up which involve using a webcam so I thought what better way to start than to give a quick example of how a webcam can be set up in flash. Here is a complete program - check it out. This can be copied into the first frame of the timeline.

var CAM:Camera = Camera.getCamera();

CAM.setQuality(0, 100);

CAM.setMode(550, 400, stage.frameRate );

var VIDEO:Video = new Video(550, 400);

VIDEO.attachCamera(CAM);

addChild(VIDEO);

So let's go through this one line at a time.

First the Camera class is initiated and a camera object is created using

var CAM:Camera = Camera.getCamera();

Some properties of the camera are then set: the size, quality and frame rate.

CAM.setQuality(0, 100);

CAM.setMode(550, 400, stage.frameRate );

Finally an object needs to be created to make the camera appear on the screen, so let's attach the camera to the VIDEO object, and then add the VIDEO object to the stage.

var VIDEO:Video = new Video(550, 400);

VIDEO.attachCamera(CAM);

addChild(VIDEO);

Just click below for an example of how this works.

In my next few blogs I'll show you some cool stuff you can do with the webcam!

Enjoy guys!

Sunday, 27 September 2009

Webcam Edge Detection

Here's a quick experiment I did to try to show just the outlines of an image. Outlines occur where there is a change in colour, i.e. along contours with a contrast greater than zero. By shifting two copies of an image over one another by a pixel or two in both the vertical and horizontal direction, and then calculating the difference between the two images in their new positions, the outlines can be found.

Just click below to check out an example:

After calculating the difference blend, the contrast is increased using a matrix filter. This method could be used for shape detection.
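For reference, the whole effect fits in a few lines using BitmapData.draw() with a difference blend and a ColorMatrixFilter. This is a minimal sketch rather than the code behind the demo; the function name, the shift and the gain value are assumptions.

```actionscript
import flash.display.BitmapData;
import flash.display.BlendMode;
import flash.filters.ColorMatrixFilter;
import flash.geom.Matrix;
import flash.geom.Point;

// Returns a copy of `source` showing only its outlines.
// shift: offset in pixels applied both horizontally and vertically.
// gain: multiplier applied to each colour channel to boost faint differences.
function outline(source:BitmapData, shift:int, gain:Number):BitmapData {
    var out:BitmapData = source.clone();
    // Draw the same image again, offset by `shift` pixels, as a difference
    // blend. Flat areas cancel to black; only changes in colour survive.
    // The top and left `shift` pixels are not covered by the shifted copy,
    // so they keep their original values.
    out.draw(source, new Matrix(1, 0, 0, 1, shift, shift), null,
             BlendMode.DIFFERENCE);
    // Increase contrast by scaling the red, green and blue channels.
    var boost:ColorMatrixFilter = new ColorMatrixFilter([
        gain, 0, 0, 0, 0,
        0, gain, 0, 0, 0,
        0, 0, gain, 0, 0,
        0, 0, 0, 1, 0]);
    out.applyFilter(out, out.rect, new Point(0, 0), boost);
    return out;
}
```

For a live stream you would draw the Video object into a BitmapData on every frame and pass that in, for example outline(frameBitmap, 1, 4), where frameBitmap is whatever bitmap you captured the frame into.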

The next challenge would be to determine points along each contour, and store contour data to actually do something with it, instead of just showing it visually. Enjoy!

Wednesday, 10 June 2009

Webcam Studio 1.0

In an earlier post I briefly mentioned the potential for the use of Pixel Bender filters in flash using actionscript. I decided to make some software for Facebook in which a user could take snapshots of themselves with a webcam while applying some filters (Webcam Studio 1.0). The concept is very similar to that of Photo Booth for Mac OS X, with the difference that the software can be accessed online, from any platform, and can upload photos directly to your Facebook gallery. Below are a few screen shots, with a few of the filters, including Pop Art, Fish Eye and Difference Mapping. Another feature that sets Webcam Studio apart from Photo Booth is the ability to adjust Brightness, Contrast and Saturation in real time.

Check out the app here and let me know what you think!

Cheers

Sam

Monday, 25 May 2009

Pixel Bender Filters

Just had a little play around with Pixel Bender and it is absolutely fantastic. In this experiment I just recreated 3 bog standard filters, contrast, brightness and saturation, and then made a little posterizer and a sepia filter as well. Creating these kinds of filters is so much easier in Pixel Bender than it is using matrices in flash.

All of the parameters can be adjusted and multiple filters can be applied at the same time.

The posterizer has some flaws which I need to sort out but that movie is the result of literally half an hour playing around and getting them all to work.

Next step, saving video snapshots to a server!

Enjoy

Labels: AS3, Flash, pixel bender, webcam

Wednesday, 13 May 2009

Liquid Webcam

I was just playing around with the convolution and displacement map filters in flash and here are a few of the effects I managed to create. The first two use the convolution filter to displace pixels and cause the ripple-like effects. The 3rd just takes a pixel and averages its height with the heights of surrounding pixels. The displacement map filter is then used to transform a webcam stream. In the first two examples the diffraction effect is actually quite realistic, although a custom pixel transform would have to be created for factors such as surface angle to be taken into account.

A word of advice, only run one of these at a time because 3 webcam streams with filters can be quite CPU intensive!

So first we have a ripple effect. Click and drag to create ripples on the webcam stream.

Next we have a rain effect. Random pixels are created which are then convolved to create the effect of rain drops hitting water.

Another mouse drag effect. Clicking the mouse increases the height of the water. This one is quite an interesting effect, not sure it's as aesthetically pleasing as the other two though!

Enjoy!

Labels: AS3, fluid dynamics, webcam
