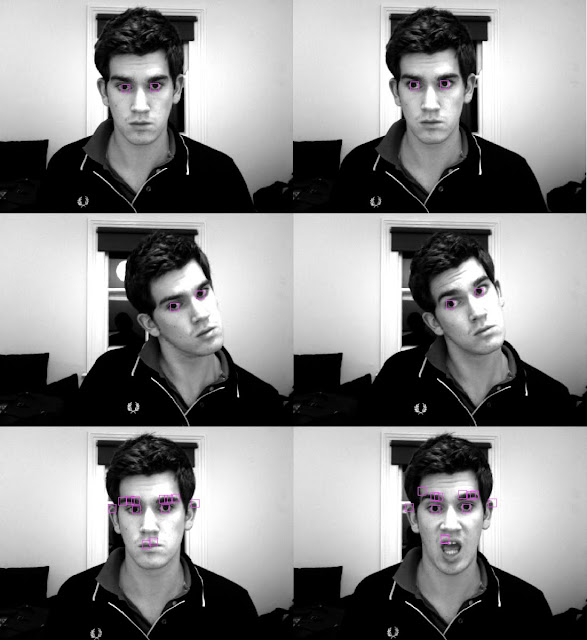

Over the last few days I have been looking at a various algorithms and methods used in face recognition processes. The first method I looked at uses a colour space known as TSL (closely related to HSL - hue, saturation, luminosity). This colour space was developed with the intention of being far closer to the way the human brain sees colours than RGB and other computationally preferred systems. Taking a webcam stream and converting all pixels into TSL colour space with [R,G,B] -> [T,S,L], I found that although in certain lighting conditions the system can differentiate between skin colour and other surfaces, the method is far from ideal. For example the cream walls in my house can often be identified as skin colour, which clearly shows an issue. It is also clear from the images that areas of my face that are in shade are not recognised as skin. These issues are best addressed using methods that do not depend on colour.

In my research I found two viable methods for use in face recognition, using

eigenfaces, and fisherfaces.

Eigenfaces seemed to be a more commonly used method, so I decided to follow that route first (albeit roughly, as I'm sure you all know by now I like to do things my own way when I program). In order to recognise a face in an image, the computer has to be trained to know what a face looks like. The first step involved writing a class to import .pgm files from the

CMU face database. Here is a sample of what the faces looked like when imported. All images are in the frontal pose, with similar lighting conditions, and varying facial expressions.

The next three steps involved creating an average face, and taking the resulting image and calculating it's vertical and horizontal gradients. The average face simply sums all of the pixels at each location for each face, and takes the average value. To calculate the gradient the difference between two adjacent pixels is taken in either the vertical or the horizontal direction. Computers find it easy to see vertical and horizontal lines (I have already written some

basic shape detection software which uses these kinds of algorithms) so I thought this might be a good idea to use these as comparisons with found faces. I planned on using a kind of probability test, with a threshold as to the likeliness that any part of the image is a face, by comparing it to the mean face, the horizontal gradient face, and the vertical gradient face.

The three faces found are shown below for this database. Clearly one could find the mean face of all people wearing glasses, or all men, or all women, and this would affect it's final appearance. Therefore it could theoretically be simple to build in gender testing using webcams (assuming a complete lack of androgyny which clearly there is not....), but a probabilistic approach could still be taken.

The mean face looks incredibly symmetric and smooth. This is perfection folks, and its kind of frightening! The idea behind using these for face recognition is relatively simple. Scan an image taking the difference between an overlaid mean face, and the region of the image being scanned. If the difference is below a threshold it means that the images are similar. This means it is likely that there is a face where you are checking. To ensure it is a face consider the horizontal and vertical gradients of the mean and compare them. If they are similar to within a certain threshold it is very likely you have found a face!

I'll come back to you when I have some working flash files and source code!